Fun fact about me: I didn’t start out studying computer science. My bachelor’s degree was in electronics.

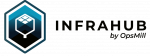

Part of the electronics curriculum (I’m dating myself here!) was learning assembly. Assembly is the lowest-level programming language humans can reasonably write. It consists of cryptic instructions like MOV AX, 0x1234 that directly manipulate a computer's processor and memory. I can tell you from experience it is brutally difficult to learn and read.

Today, almost no one learns assembly, not even in electronics programs. It's been completely abstracted away. That abstraction happened through compilers, tools that take human-friendly code and transform it into machine-executable instructions.

A Python developer writes total = sum(numbers), and the language's compiler and runtime handle the tedious work of translating that into the many machine instructions needed to make it happen.
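If you want to peek one layer down yourself, here is a minimal sketch using Python's standard `dis` module. It assumes the CPython interpreter, where the first translation step produces bytecode rather than assembly. The function name `total_of` is just an illustrative wrapper I made up for the one-liner above.

```python
import dis

def total_of(numbers):
    # The one-liner from the text, wrapped in a function so it can be inspected.
    total = sum(numbers)
    return total

# Collect the names of the bytecode instructions CPython generated.
opnames = [instr.opname for instr in dis.get_instructions(total_of)]
print(opnames)  # exact instruction names vary between Python versions

# For a full human-readable listing, call dis.dis(total_of).
```

Even this tiny function expands into a handful of lower-level instructions (load the name, load the argument, call, store, return), and each of those is in turn implemented by many machine instructions inside the interpreter.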

Now we're watching the same pattern repeat, just one level higher. AI is the new compiler.

What is a compiler?

To understand where we're headed, it helps to understand what compilers actually do. They're essentially abstraction engines that let humans work at higher levels of thinking.

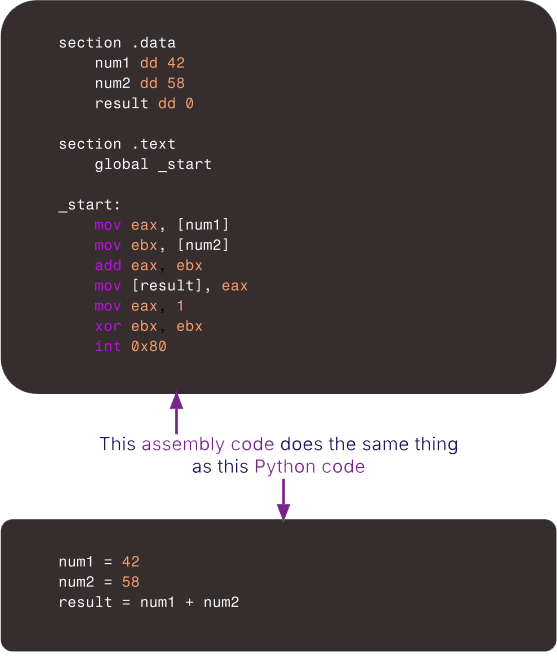

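When you write code, a typical compiler toolchain takes it through several transformation stages (CPython stops early, at bytecode that it then interprets, while LLVM-based toolchains go all the way down):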

- Your source code becomes an intermediate representation (IR)

- That IR gets optimized and transformed

- It becomes assembly language

- Finally, it becomes machine code that executes on your processor

Each part of this transformation serves a specific purpose:

Portability: Your Python code runs on Intel x86 and on ARM processors such as Apple Silicon without modification. The compiler handles architecture-specific details.

Optimization: Tools like LLVM apply dozens of optimization passes at the IR level, such as dead code elimination, loop unrolling, and constant folding. These are far easier to perform on a structured representation than on raw instructions.

Debugging: When something breaks, you see a Python stack trace instead of raw memory addresses. Each layer preserves enough information to help you understand what went wrong.

Composability: You can import libraries, use frameworks, and build on existing code. Each layer maintains interfaces that let software components work together.

This is how software has always evolved. JSX compiles to JavaScript, C compiles to assembly. Each generation of tools creates a higher level of abstraction, letting developers focus on what they want to build rather than how the machine executes it.
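To make the optimization point concrete, here is a toy constant-folding pass. It is a sketch, not how LLVM does it: it treats Python's own syntax tree (via the standard `ast` module) as a stand-in for an IR, and the names `ConstantFolder` and `fold_constants` are hypothetical helpers invented for this example. It requires Python 3.9+ for `ast.unparse`.

```python
import ast
import operator

# Operators this toy pass knows how to evaluate ahead of time.
_FOLDABLE = {ast.Add: operator.add, ast.Sub: operator.sub, ast.Mult: operator.mul}

class ConstantFolder(ast.NodeTransformer):
    """Replace arithmetic on numeric literals with the computed result."""

    def visit_BinOp(self, node):
        self.generic_visit(node)  # fold the operands first (bottom-up)
        fold = _FOLDABLE.get(type(node.op))
        left, right = node.left, node.right
        if (
            fold is not None
            and isinstance(left, ast.Constant)
            and isinstance(right, ast.Constant)
            and isinstance(left.value, (int, float))
            and isinstance(right.value, (int, float))
        ):
            folded = ast.Constant(value=fold(left.value, right.value))
            return ast.copy_location(folded, node)
        return node

def fold_constants(source: str) -> str:
    """Parse source, fold constant arithmetic, and return the rewritten source."""
    tree = ConstantFolder().visit(ast.parse(source))
    ast.fix_missing_locations(tree)
    return ast.unparse(tree)

print(fold_constants("seconds = 60 * 60 * 24 + offset"))
# seconds = 86400 + offset
```

The pass only works because the tree tells it which pieces are literals and which are variables. Doing the same thing on a flat stream of machine instructions would be much harder, which is the argument for optimizing at the IR level.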

When agents write the code

Developer Wes McKinney recently pointed out that, until now, programming languages have been optimized for human readability and ergonomics. But if AI agents write most of the code in the future, what should languages optimize for?

McKinney’s answer, which I completely agree with: compilation speed, portability, and performance. Human-friendliness becomes less critical in the intermediate layers.

This changes everything. Compilers freed us from worrying about registers and memory addresses. Now AI, acting as a new compiler layer, is freeing us from worrying about syntax, algorithms, and implementation details. The stack now looks like this:

You describe what you want in natural language. AI "compiles" that into code. Traditional compilers handle the rest. It's the same fundamental concept that's driven programming for decades: raise the level of abstraction so humans can work at the level of intent rather than implementation.
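Here is a deliberately tiny sketch of that layering. The AI step is faked with a lookup table, so `CANNED_TRANSLATIONS`, `ai_compile`, and `run_intent` are all hypothetical names and not a real model or API. The lower layers are real, though: Python's built-in `compile` and `exec` turn the generated source into bytecode and run it.

```python
# Hypothetical stand-in for an AI model: a fixed mapping from intent to source.
# A real system would call a language model here.
CANNED_TRANSLATIONS = {
    "add up the numbers": "total = sum(numbers)",
}

def ai_compile(intent: str) -> str:
    """Layer 1 (stubbed): natural language -> Python source."""
    return CANNED_TRANSLATIONS[intent.lower().strip()]

def run_intent(intent: str, **inputs) -> dict:
    """Push an intent through every layer and return the resulting variables."""
    source = ai_compile(intent)                            # intent -> source code
    code_object = compile(source, "<ai-generated>", "exec")  # source -> bytecode
    namespace = dict(inputs)
    exec(code_object, namespace)                           # bytecode -> execution
    return namespace

result = run_intent("Add up the numbers", numbers=[1, 2, 3])
print(result["total"])  # 6
```

The person calling `run_intent` never sees the generated source or the bytecode. Each layer only needs to trust the one directly beneath it.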

The evolving language stack

Will this current four-layer stack collapse as AI takes over code generation?

The intuitive answer is yes. If AI generates code and machines execute it, why keep a human-readable layer in between?

We could skip Python and JavaScript entirely and have AI generate an optimized intermediate representation directly, something like LLVM IR or WebAssembly. This representation would be designed purely for machines: dense, fast to compile, and portable across architectures.

But I don't think that's how it will play out. And I'm not sure we'd want it to.

There's real value in keeping abstraction layers for AI to build on, and programming languages are exactly that kind of layer. They represent decades of accumulated thinking about how to structure computation, handle errors, and compose complex systems. That's a foundation worth preserving.

The more likely future is that programming languages persist, but evolve away from human readability. Today's languages were optimized for humans to read and write. Tomorrow's may be optimized for AI agents to generate and compilers to consume, prioritizing fast compilation, portability, and performance over legibility.

These new intermediate languages might be as incomprehensible to most developers as assembly code is today. And that's fine.

Just as Python developers don't need to understand assembly, tomorrow's builders won't need to understand the code their AI compiler generates. Humans will continue to interact at the natural language level, and the layers below will increasingly become the domain of machines.

Could we skip even further and go straight from natural language to machine code?

Probably not. That would mean the AI compiler needs to understand every hardware architecture deeply, re-"compile" for every different processor, and replicate decades of optimization work that tools like LLVM provide. It would lose portability, optimization infrastructure, and debuggability. Those are all benefits that make intermediate representations valuable.
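A quick back-of-the-envelope way to see the portability cost: count how many translators you need with and without a shared intermediate layer. The function name and the numbers below are illustrative assumptions, not measurements.

```python
def translators_needed(sources: int, targets: int, shared_ir: bool) -> int:
    """Count the translators required to connect every source to every target."""
    if shared_ir:
        # Each source lowers to the IR once; each target is reached from the IR once.
        return sources + targets
    # Without a shared layer, every source/target pair needs its own translator.
    return sources * targets

# Illustrative numbers only: 5 source languages, 4 processor architectures.
print(translators_needed(5, 4, shared_ir=False))  # 20
print(translators_needed(5, 4, shared_ir=True))   # 9
```

The gap widens with every new language or chip, and going straight from natural language to machine code puts the AI on the multiplicative side of that trade.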

So my prediction is that the language stack won't get smaller, but it will change significantly.

Plus ça change (aka we’ve been here before)

Every generation of developers has learned to trust the compiler. We keep defining what we want to build at higher and higher levels, and we trust that the layer below handles the details correctly.

Assembly programmers had to trust C compilers. C programmers had to trust garbage collectors and interpreters. Now we're learning to trust AI as a compiler to take our natural language specifications and generate correct, efficient code.*

Programming has always been about translating human intent into machine execution. With AI, we’re adding one more layer to an age-old model to make that translation more natural.

When I was writing assembly code in electronics school, I had to think in terms of registers, memory addresses, and jump instructions. Today's developers think in terms of functions, objects, and data structures. My prediction is that tomorrow's builders will think in terms of outcomes, behaviors, and user experiences. And the AI compiler will handle the rest.

*Of course, we’ll need some time and evolution for AI to get to a place where we can implicitly trust it to do that compilation correctly. 😉